The EV Charging Investment Thesis Has Been Boosted By Recent Big Auto Development

We previously covered ChargePoint (NYSE: CHPT) in March 2023. The stock had been sold off then, attributed to the double misses in the FQ4'23 earnings call and softer FQ1'24 guidance. However, we believe the market overreacted, given management's guidance of positive Free Cash Flow generation by the end of FY2024.

Unfortunately, the stock has been further impacted by the Supercharger collaboration between Tesla (TSLA), Ford (F), and General Motors (GM). That deal has triggered mixed sentiment toward many EV charging stocks, CHPT included, due to the potential impact on adoption and on the top/bottom line. With CHPT similarly jumping on the bandwagon by offering connectors for TSLA's charging standard, the deal appears to be sealed, cementing the NACS connector as the accepted convention for most EV charging moving forward. In our view, these optics point to TSLA's growing dominance in the EV charging space.

Therefore, it is unsurprising that CHPT's valuations have been impacted, moderating to NTM EV/Revenues of 3.92x at the time of writing, down from its 2Y mean of 16.15x. However, we believe the pessimism is unwarranted, since the charging company is expected to record top-line growth of +45.8% through FY2026, compared to the hyper-pandemic levels of +48%. In addition, management remains committed to positive adj. EBITDA by the end of CY2024, or the equivalent of FY2025. By the latest quarter, we were already seeing exemplary improvements in its gross margins to 23.5% (+1.9 points QoQ/+8.7 points YoY). This is on top of the visible restraint in CHPT's operating expenses at $110.46M (in line QoQ/+8.3% YoY), resulting in adj.


This guide provides you with tips on how to look after your mental health at work. You can read the guide below, download it as a PDF or buy printed copies in our online shop.

For many of us, work is a significant part of our lives. It is where we spend much of our time, where we get our income and often where we make our friends. Having a fulfilling job can be good for your mental health and general wellbeing. We all have times when life gets on top of us. Sometimes that's work-related, like deadlines or travel, and sometimes it's something else – our health, our relationships, or our circumstances.

The value added to the economy by people who are at work and have or have had mental health problems is as high as £225 billion per year, which represents 12.1% of the UK's total GDP. It's vital that we protect that value by addressing mental health at work for those with existing issues, for those at risk, and for the workforce as a whole. A toxic work environment can be corrosive to our mental health. We believe in workplaces where everyone can thrive. We also believe in the role of employers, employees and businesses in creating thriving communities. Good mental health at work and good management go hand in hand, and there is strong evidence that workplaces with high levels of mental wellbeing are more productive. Addressing wellbeing at work increases productivity by as much as 12%.

- Have an idea of how to manage your own mental health at work.
- Have an idea of how to reach out to a colleague in distress.
- Have an idea of how you can work with others to make your workplace more mentally healthy for everyone.

Mental health is the way we think and feel and our ability to deal with ups and downs. When we enjoy good mental health, we have a sense of purpose and direction, the energy to do the things we want to do, and the ability to deal with the challenges that happen in our lives. When we think about our physical health, we know that there's a place for keeping ourselves fit, and a place for getting appropriate help as early as possible so we can get better.

If you enjoy good mental health, you can:

- Play a full part in your relationships, your workplace, and your community.

Our mental health doesn't always stay the same. It can fluctuate as circumstances change and as we move through different stages in our lives.

Distress is a word used to describe times when a person isn't coping – for whatever reason. It could be something at home, the pressure of work, or the start of a mental health problem like depression. When we feel distressed, we need a compassionate, human response. The earlier we are able to recognise when something isn't quite right, the earlier we can get support.

We all have times when we feel down, stressed or frightened. Most of the time those feelings pass, but sometimes they develop into a mental health problem like anxiety or depression, which can impact on our daily lives. Factors like poverty, genetics, childhood trauma, discrimination, or ongoing physical illness make it more likely that we will develop mental health problems, but mental health problems can happen to anybody. For some people, mental health problems become complex and require support and treatment for life.

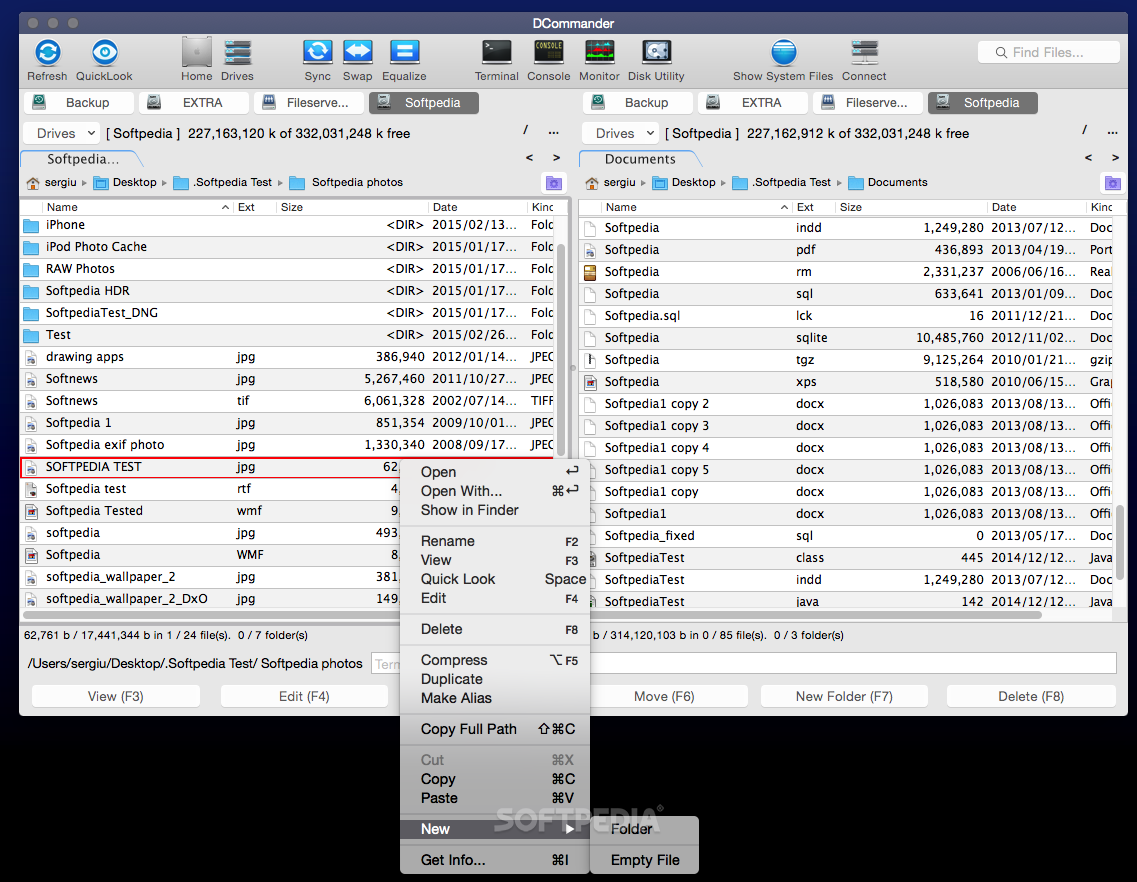

Devstorm DCommander is approved as a Mac alternative to the famous Total Commander. This software lets you:

- See the complete file name of all files without manually adjusting column width.
- Copy and move files one by one, no matter how many times you press the copy/cut/paste shortcut, without waiting for previous operations to complete.
- Press Command + X to cut, and press Command + P to paste.

I know it is already available, but it is buried in the Other section. I'm suggesting instead of, because sometimes folders contain '.' and use of would trim part of the full name.

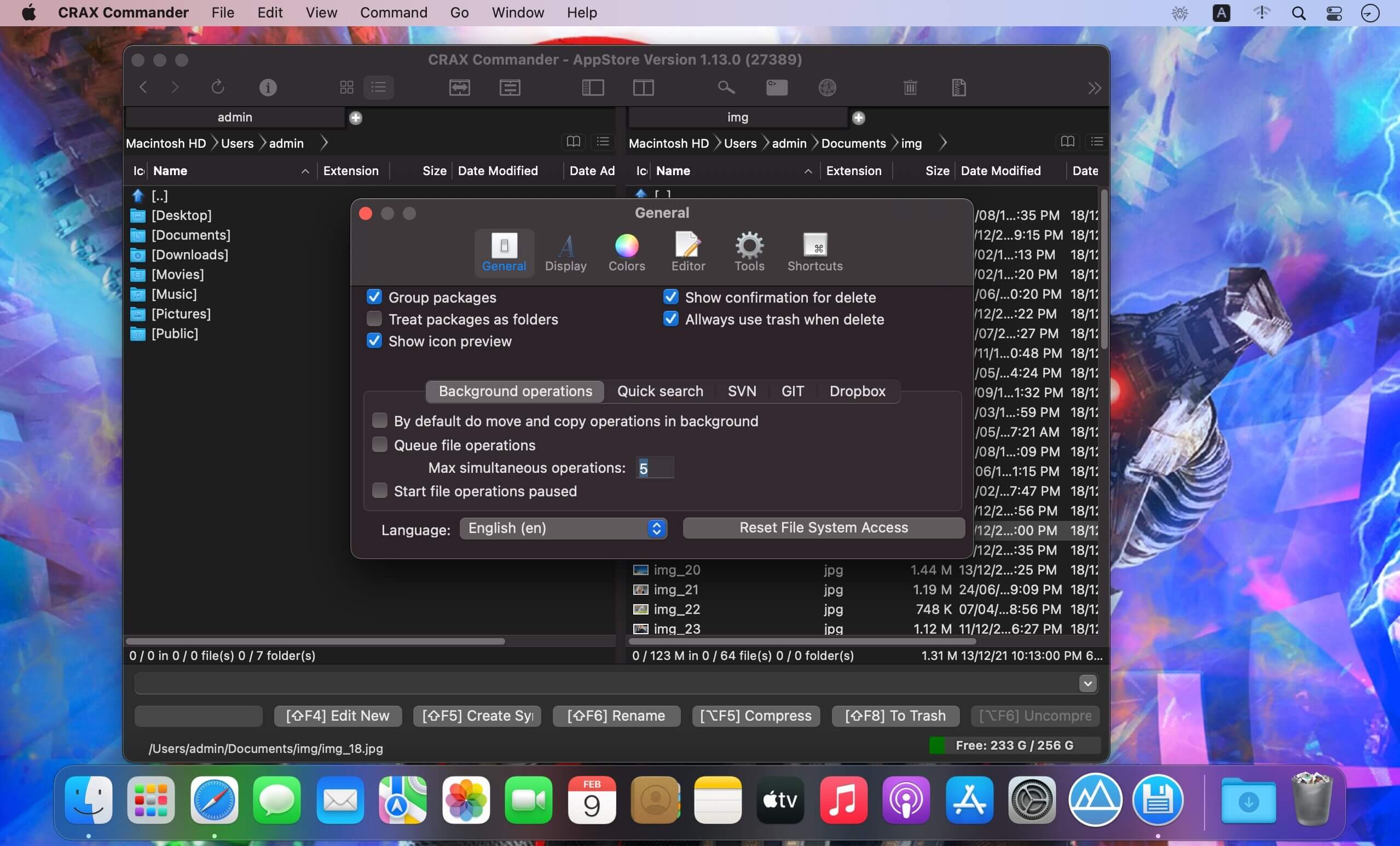

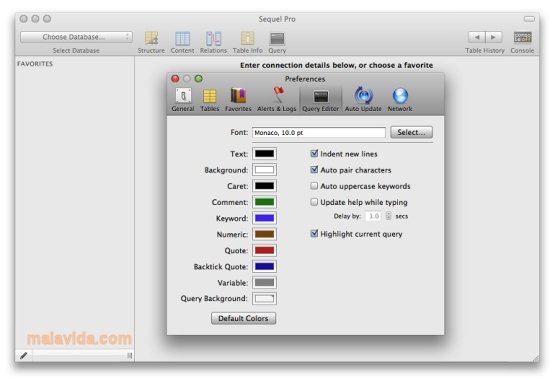

Well, in the current screenshot, Batch Rename does exactly what it's asked to do: "put file name", "put '.' sign", "put file extension". Extensions are empty in the provided example, that's right, but the "." sign in the pattern string is not going anywhere. It's possible to add some additional checking and switch to the "" pattern when all source items have no extension. However, this can't work all the time. You can select some items with extensions and other items without extensions simultaneously, so they simply can't be processed uniformly. So there's no "right" answer to this issue, unfortunately.

I fully agree that there's no "right" answer, and I also think that a complex solution will only shoot us (you) in the foot in the long run. So what if we just enable users to instruct NC to do what is needed? It can be done by including a "Full name" button in the UI.

What's the difference between CRAX Commander and Ultimate File Manager? Compare CRAX Commander vs. Ultimate File Manager in 2023 by cost, reviews, features, integrations, deployment, target market, support options, trial offers, training options, years in business, region, and more using the chart below. The best alternative is Double Commander, which is both free and Open Source.

Update 2021: Sequel Ace is a good, similar, actively maintained alternative (credits to Maciej Kwas's answer). Update 2020: Sequel Pro is officially dead but unofficially alive! You can find the "nightly" builds that don't have this issue (i.e. All the other solutions here recommend changing your DB settings (making it less secure, as advertised by MySQL) for the tool you are using.
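The trailing-dot problem discussed in the thread can be reproduced with a small pattern renderer. This is a hypothetical sketch and not Nimble Commander's actual Batch Rename code. The token names (`"name"`, `"."`, `"ext"`, `"fullname"`) and the `dot_only_with_ext` flag are our own inventions, chosen to mirror "put file name", "put '.' sign", "put file extension" and the suggested "Full name" button. The sketch relies on Python's `os.path.splitext`, which treats the last dot as the extension separator. That also shows how a stem-only pattern trims a folder name that contains '.'.

```python
import os


def render(filename, tokens, dot_only_with_ext=False):
    """Build a new name from a list of hypothetical tokens.

    "name"     -> the stem (everything before the last dot)
    "ext"      -> the extension, without its leading dot
    "fullname" -> the original name, untouched
    "."        -> a literal dot (optionally skipped when there is no extension)
    Anything else is copied through as literal text.
    """
    stem, ext = os.path.splitext(filename)
    ext = ext[1:]  # splitext keeps the leading dot; drop it
    out = []
    for tok in tokens:
        if tok == "name":
            out.append(stem)
        elif tok == "ext":
            out.append(ext)
        elif tok == "fullname":
            out.append(filename)
        elif tok == "." and dot_only_with_ext and not ext:
            continue  # no extension, so leave out the separator
        else:
            out.append(tok)
    return "".join(out)


if __name__ == "__main__":
    pattern = ["name", ".", "ext"]
    for item in ["report.txt", "README", "photos.2023"]:
        print(item, "->", render(item, pattern), "|",
              render(item, pattern, dot_only_with_ext=True))
    # A stem-only pattern trims a dotted folder name; "fullname" keeps it whole.
    print(render("photos.2023", ["name"]), render("photos.2023", ["fullname"]))
```

Because `dot_only_with_ext` is decided per item, a mixed selection of files with and without extensions is handled item by item. As the thread points out, the trade-off is that one pattern no longer yields uniform results across the whole selection.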

Don't downgrade your DB because of a tool.

For those who are still struggling with Sequel Pro problems: Sequel Pro was a great product, but it has tons of unresolved issues and its last release dates back to 2016, so it may be a good idea to look for alternatives. There is a fork of Sequel Pro called Sequel Ace that seems to be pretty stable and up to date. It keeps similar functionality and a similar look and feel, yet it is free of the old Sequel Pro problems.

This is because Sequel Pro is not ready yet for a new kind of user login, as the error states: there is no driver. Basically, you will have to perform some actions manually. However, your database data won't be deleted as in the solution below.

mysql + homebrew

Go to the my.cnf file and in section add the line:

`default-authentication-plugin=mysql_native_password`

The my.cnf file is located in /etc/my.cnf on Unix/Linux.

Log in to the MySQL server from the terminal: run `mysql -u root -p`, then inside the shell execute this command (replacing with your actual password):

`ALTER USER IDENTIFIED WITH mysql_native_password BY '';`

Exit from the mysql shell with `exit` and run `brew services restart mysql`. After the restart you should be able to connect.

Quick fix (destructive method)

Apple Logo > System Preferences > MySQL > Initialize Database, then type your new password and select 'Use legacy password'. Do this only on fresh installs, because otherwise you may lose your db tables.

I am working on learning neural networks, and I am a bit unclear on the benefits of the cross-entropy loss function for multi-class image classification. I am hoping someone can help point me in the right direction. I am going to outline my thought process and what I think I know.

A simple way to measure loss is to take the difference between the prediction (passed through a sigmoid so all values are between 0 and 1) and my y_truth. In a single-class classifier (taken from the new fastai book by Sylvain and Jeremy on /fastai/fastbook), it looks like this:

    import torch

    def mnist_loss(predictions, targets):
        return torch.where(targets == 1, 1 - predictions, predictions).mean()

Why should I use cross-entropy rather than extending this? If I take predictions that are all between 0 and 1, why not just take the difference? For example, if we have 2 images we are classifying into 3 classes, we may have this: pred = tensor(,)

The more confident the model is about a wrong class, the more it adds to the loss. The less confident it is about a correct class, the more that adds to the loss as well. We could let 1 image have multiple classes by setting multiple columns of the target to '1' in the same row. That seems to do what we want, and the more we minimize it, the more closely the model matches the targets.

My understanding is that cross-entropy does something similar. However, rather than only putting each prediction between 0 and 1, it turns each prediction into a % likelihood of that class (so all of them sum to 1). To me, this seems to do roughly the same thing, just with an additional conversion. I don't really understand why this would make it easier or faster for the model to train.

My goal is to build an understanding of loss functions so that I can understand when and how they should be changed for specific problems. Can anyone point me in the right direction for where I need to either expand or correct my understanding of this?

I have been looking, and your response helped a ton. I am going to summarize what I think I learned. In addition to what SamJoel said, these 2 quotes from /fastai/fastbook proved very helpful to me. I had read them before, but they only made sense after what SamJoel said:

> When we first take the softmax, and then the log likelihood of that, that combination is called cross-entropy loss. In PyTorch, this is available as nn.CrossEntropyLoss (which, in practice, actually does log_softmax and then nll_loss).

> Note that because we have a one-hot-encoded dependent variable, we can't directly use nll_loss or softmax (and therefore we can't use cross_entropy): softmax, as we saw, requires that all predictions sum to 1, and tends to push one activation to be much larger than the others (due to the use of exp); however, we may well have multiple objects that we're confident appear in an image, so restricting the maximum sum of activations to 1 is not a good idea. By the same reasoning, we may want the sum to be less than 1, if we don't think any of the categories appear in an image.

So basically, using the softmax (i.e. cross-entropy) is better for gradient descent because additional information is baked into the loss function. If 1 class's chance increases, then one or more of the other classes' chances decrease. This makes sense for a single-label problem and makes the space you need to search smaller. Softmax passes information through in a way that says there's only 1 label per image, since the outputs all sum to 1. If that is not true, softmax should not be used. This is why, for multi-label problems, cross-entropy should be replaced with binary cross-entropy, which uses a sigmoid instead of a softmax for exactly this reason.

I am not a real mathematician, so experts, feel free to make corrections. As I understand it, many different functions can serve as a loss function. A loss only needs to penalize the wrong guesses, reward the correct ones, and be mostly differentiable. So the function you posted above would work fine. Each loss function has its own justifications and characteristics, for example, how sharply it separates classes and how much it penalizes outliers. See this article for examples of standard loss functions:

I've used hinge loss and found it trained to higher accuracy for one problem. Cross-entropy loss is the loss function most often used in machine learning. Its strength is that it measures the divergence between an unknown probability distribution and a predicted distribution.
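To make the thread's comparison concrete, here is a minimal sketch in plain Python. It uses only the standard `math` module rather than PyTorch, so it runs without extra dependencies. All function names are hypothetical. `difference_loss` is our own multi-column extension of `mnist_loss`, and it assumes raw logits that it passes through a sigmoid itself.

The two gradient helpers illustrate one commonly given answer to "why does cross-entropy train faster?", which the thread does not spell out. For a column whose target is 1:

- The gradient of 1 - σ(z) is -σ(z)(1 - σ(z)). It almost vanishes when the model is confidently wrong (very negative z).
- The gradient of -log σ(z) is σ(z) - 1, which stays close to -1 in that case.

The last demo shows the multi-label point from the replies. With target [1, 1, 0], softmax has to split probability between the two positive classes, while independent sigmoids (as in binary cross-entropy) can push both towards 1.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def softmax(logits):
    # Subtract the max logit before exponentiating for numerical stability.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def difference_loss(logits, targets):
    """Hypothetical multi-column extension of mnist_loss: sigmoid each
    logit, then average 1 - p where the target is 1 and p where it is 0."""
    preds = [sigmoid(z) for z in logits]
    return sum(1 - p if t == 1 else p for p, t in zip(preds, targets)) / len(targets)


def softmax_cross_entropy(logits, true_class):
    """Single-label loss: negative log of the softmax probability of the true class."""
    return -math.log(softmax(logits)[true_class])


def binary_cross_entropy(logits, targets):
    """Multi-label loss: an independent sigmoid per column, averaged log loss.
    Naive formula for clarity; very large logits can make log(0) fail."""
    total = 0.0
    for z, t in zip(logits, targets):
        p = sigmoid(z)
        total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
    return total / len(targets)


def grad_difference(z):
    """d/dz of (1 - sigmoid(z)): the difference loss for a column whose target is 1."""
    s = sigmoid(z)
    return -s * (1 - s)


def grad_log_loss(z):
    """d/dz of -log(sigmoid(z)): the log loss for a column whose target is 1."""
    return sigmoid(z) - 1


if __name__ == "__main__":
    # A confidently wrong column: target 1, but the logit is very negative.
    z = -10.0
    print("difference-loss gradient:", grad_difference(z))
    print("log-loss gradient:       ", grad_log_loss(z))

    # Multi-label target [1, 1, 0]: softmax splits probability between the two
    # positive classes, while independent sigmoids can make both close to 1.
    logits = [5.0, 5.0, -5.0]
    print("softmax:", softmax(logits))
    print("sigmoid:", [sigmoid(v) for v in logits])
    print("BCE:    ", binary_cross_entropy(logits, [1, 1, 0]))
```

Plain floats and lists keep each formula visible. In practice you would likely reach for PyTorch's numerically stable built-ins, such as the nn.CrossEntropyLoss mentioned in the thread or nn.BCEWithLogitsLoss for the multi-label case.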
